In the age of artificial intelligence, data is not just about rows and columns anymore — it’s about context, patterns, and meaning. While traditional databases efficiently handle structured data, the rise of AI applications has introduced new data types, particularly unstructured and high-dimensional data. This is where vector databases come into play, bridging the gap between structured storage and intelligent data retrieval.

Let’s explore how vector databases differ from traditional ones, why they matter for modern workloads, and how organizations can benefit from using them.

Understanding Traditional Databases

Traditional databases — whether relational (SQL) or non-relational (NoSQL) — form the backbone of data management systems used for decades.

Relational databases (RDBMS) store data in tables with fixed schemas, ideal for structured data such as customer records, transaction logs, or inventory details. They use SQL queries to retrieve and manipulate data efficiently.

NoSQL databases, on the other hand, handle semi-structured or unstructured data formats like JSON, key-value pairs, or graphs. They provide flexibility and scalability for web-scale or distributed applications.

The core strength of traditional databases lies in deterministic querying, where you look for exact matches — retrieving a product by its ID, finding a customer by email, or filtering orders between specific dates. However, they struggle when queries demand semantic understanding rather than precise matching.

What Are Vector Databases?

A vector database is specifically designed to manage and query vector embeddings — numerical representations of data generated by machine learning models. These embeddings translate complex content like images, text, audio, or video into a high-dimensional vector space, allowing for similarity search based on meaning rather than exact values.

For example, instead of matching only the keyword “car,” a vector database can also surface “automobile,” “vehicle,” or “SUV,” because the embedding model places related concepts close together in vector space.
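To make "mathematically close" concrete, here is a minimal sketch that ranks a few words by cosine similarity to "car". The three-dimensional vectors are hand-made for illustration and are not the output of any real model; real embeddings typically have hundreds of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy "embeddings", invented for this example only.
toy_embeddings = {
    "car":        [0.90, 0.80, 0.10],
    "automobile": [0.85, 0.82, 0.12],
    "SUV":        [0.80, 0.90, 0.20],
    "banana":     [0.10, 0.05, 0.95],
}

query = toy_embeddings["car"]
# Sort every word by how similar its vector is to the query vector.
ranked = sorted(
    toy_embeddings,
    key=lambda word: cosine_similarity(query, toy_embeddings[word]),
    reverse=True,
)
print(ranked)
```

With these made-up values, the vehicle-related words rank ahead of "banana" even though none of them shares a keyword with "car".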

This capability makes vector databases essential for AI-driven applications such as semantic search, recommendation systems, natural language interactions, and image recognition.

Core Difference: Exact Match vs. Similarity Search

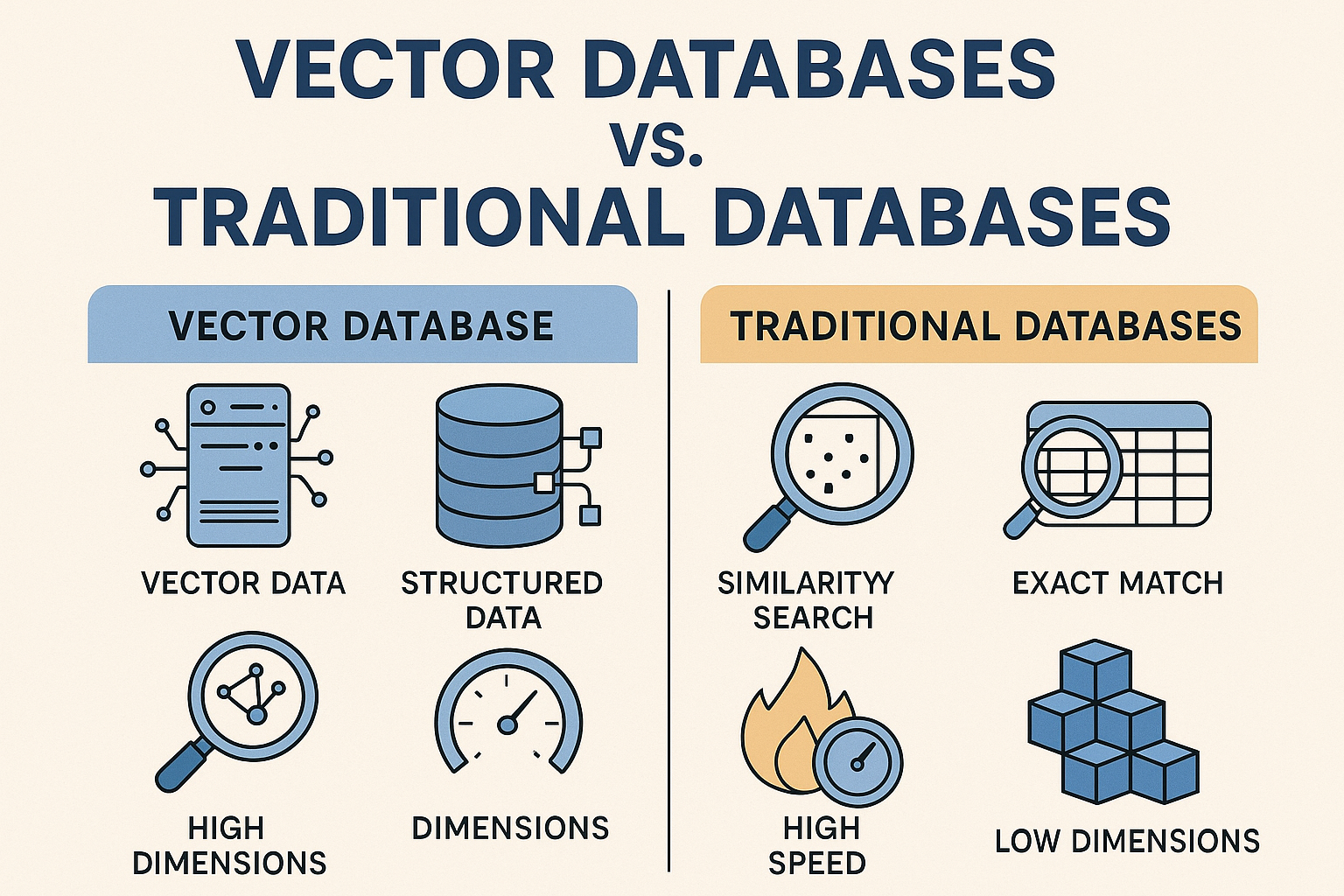

The defining contrast lies in the querying mechanism:

A traditional database relies on exact-match logic. Queries return results that meet strict equality or range conditions.

A vector database, on the other hand, uses Approximate Nearest Neighbor (ANN) algorithms to identify the most semantically similar data points in a vector space — even when there’s no perfect textual or categorical match.

This shift from syntactic to semantic retrieval enables intelligent operations across enormous datasets where contextual meaning is more valuable than direct equivalence.

How Data Is Stored and Indexed

In a traditional database:

Data is organized into rows and columns.

Indexes are built using B-trees or hash tables.

Each query navigates these indexes to find matches quickly.
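The points above can be sketched with Python's built-in sqlite3 module. The table, column, and index names are invented for this example; SQLite happens to implement ordinary indexes as B-trees, so it serves as a small stand-in for a traditional database.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT, city TEXT)")
conn.executemany(
    "INSERT INTO customers (email, city) VALUES (?, ?)",
    [("ana@example.com", "Lisbon"), ("bo@example.com", "Oslo"), ("cy@example.com", "Lisbon")],
)
# SQLite stores this index as a B-tree keyed on the email column.
conn.execute("CREATE INDEX idx_customers_email ON customers (email)")

# Exact match: the row is found only if the value is identical.
hit = conn.execute(
    "SELECT id, city FROM customers WHERE email = ?", ("bo@example.com",)
).fetchone()
miss = conn.execute(
    "SELECT id, city FROM customers WHERE email = ?", ("bo@example.org",)
).fetchone()

# EXPLAIN QUERY PLAN reports how SQLite intends to run the lookup.
plan = conn.execute(
    "EXPLAIN QUERY PLAN SELECT id, city FROM customers WHERE email = ?",
    ("bo@example.com",),
).fetchall()
plan_text = " ".join(str(step[-1]) for step in plan)
print(hit, miss)
print(plan_text)
```

The near-miss address returns nothing at all: an equality lookup has no notion of "almost the same".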

In a vector database:

Data is stored as high-dimensional vectors (e.g., 512 to 1536 dimensions for text embeddings).

Specialized index structures, such as Hierarchical Navigable Small World (HNSW) graphs or Inverted File Indexes (IVF), help retrieve the nearest neighbors efficiently.

Similarity measures such as cosine similarity or Euclidean distance quantify how close two vectors are, and that closeness serves as a proxy for similarity in meaning.
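As a rough illustration of the inverted-file (IVF) idea, the sketch below groups vectors under their nearest centroid and, at query time, scans only the closest group or groups. It is a simplified toy, not how any particular product implements IVF: the centroids are hand-picked rather than learned (real systems usually train them, for example with k-means), and the names `build_ivf`, `search_ivf`, and `nprobe` are chosen for this example.

```python
import math

def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def build_ivf(vectors, centroids):
    """Assign every vector id to the inverted list of its nearest centroid."""
    lists = {i: [] for i in range(len(centroids))}
    for vec_id, vec in enumerate(vectors):
        nearest = min(range(len(centroids)), key=lambda c: euclidean(vec, centroids[c]))
        lists[nearest].append(vec_id)
    return lists

def search_ivf(query, vectors, centroids, lists, k=2, nprobe=1):
    """Scan only the nprobe lists whose centroids are closest to the query."""
    probe = sorted(range(len(centroids)), key=lambda c: euclidean(query, centroids[c]))[:nprobe]
    candidates = [vec_id for c in probe for vec_id in lists[c]]
    candidates.sort(key=lambda vec_id: euclidean(query, vectors[vec_id]))
    return candidates[:k], len(candidates)

# Toy 2-D data in three visible clusters (invented values).
vectors = [
    [0.1, 0.2], [0.2, 0.1], [0.15, 0.25],   # near the origin
    [5.0, 5.0], [5.3, 4.9], [4.8, 5.2],     # near (5, 5)
    [9.8, 0.1], [10.1, 0.3],                # near (10, 0)
]
centroids = [[0.0, 0.0], [5.0, 5.0], [10.0, 0.0]]  # hand-picked assumption
lists = build_ivf(vectors, centroids)

top_ids, scanned = search_ivf([5.1, 5.0], vectors, centroids, lists, k=2, nprobe=1)
print(top_ids, scanned)
```

Only three of the eight vectors are compared against the query. The result is approximate because a true neighbor sitting in an unprobed list would be missed; raising `nprobe` trades speed for recall.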

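With suitable hardware and index tuning, this architecture can keep query latency low even across very large collections of embeddings, in exchange for returning approximate rather than guaranteed-exact nearest neighbors. That trade-off is what makes it practical for real-time AI workloads.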

Performance and Scalability

Traditional databases are optimized for transactional operations (insert, update, delete) and analytical queries (aggregations, joins). They deliver consistency, reliability, and ACID compliance, which are critical for financial, e-commerce, and enterprise applications.

Vector databases focus on search performance in high-dimensional spaces. They are optimized for fast retrieval over embeddings rather than for transactional throughput. They use in-memory computation, vector indexing, and often hybrid storage (disk + RAM) to maintain performance under large-scale inference workloads.

In practice, both systems can coexist: a traditional database manages structured metadata while a vector database handles embedding-based search results.
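One way this coexistence might look is sketched below: similarity ranking first, then a SQL filter on structured metadata. An in-memory dictionary stands in for the vector database, SQLite stands in for the traditional one, and the product data, embeddings, and `hybrid_search` helper are all invented for illustration.

```python
import math
import sqlite3

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

# Structured metadata lives in a relational table.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT, in_stock INTEGER)")
db.executemany(
    "INSERT INTO products VALUES (?, ?, ?)",
    [(1, "compact SUV", 1), (2, "family sedan", 0), (3, "mountain bike", 1)],
)

# Stand-in for the vector store: product id -> hypothetical toy embedding.
embeddings = {1: [0.9, 0.8, 0.1], 2: [0.85, 0.75, 0.2], 3: [0.2, 0.3, 0.9]}

def hybrid_search(query_vec, k=2):
    # Step 1: rank product ids by vector similarity and keep the top k.
    ranked = sorted(
        embeddings,
        key=lambda pid: cosine_similarity(query_vec, embeddings[pid]),
        reverse=True,
    )[:k]
    # Step 2: let SQL apply the structured condition to those candidates.
    placeholders = ",".join("?" for _ in ranked)
    rows = db.execute(
        f"SELECT id, name FROM products WHERE in_stock = 1 AND id IN ({placeholders})",
        ranked,
    ).fetchall()
    by_id = dict(rows)
    # Preserve the similarity order from step 1.
    return [(pid, by_id[pid]) for pid in ranked if pid in by_id]

results = hybrid_search([0.88, 0.79, 0.12])
print(results)
```

Filtering after the similarity step can return fewer than `k` rows, as it does here when the out-of-stock sedan is dropped. Whether to filter before or after the vector search is a design choice that depends on the system.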

Typical Use Cases

Traditional Databases:

Banking and transactional systems

Inventory and supply chain management

Accounting, HR, and ERP systems

Customer relationship management (CRM) data storage

Vector Databases:

Generative AI and conversational assistants

Personalized recommendation engines

Image, audio, or video similarity retrieval

Semantic knowledge bases and RAG (Retrieval-Augmented Generation) pipelines

As enterprises integrate AI into their data ecosystems, the line between these systems often blurs, leading to hybrid architectures where both operate in tandem — the traditional database handling tabular data and the vector database managing contextual intelligence.

Integration with AI Workflows

Vector databases are becoming a crucial part of modern AI pipelines. Applications built on large language models (LLMs) often embed the user’s prompt, retrieve the stored embeddings most similar to it, and pass the matching content to the model as context so that responses are more relevant. Vector databases store these embeddings and serve such lookups with low latency.
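The retrieval step of such a pipeline can be sketched as follows. Everything here is a simplified assumption: `toy_embed` merely counts words from a fixed vocabulary and stands in for a real embedding model (so it captures word overlap, not meaning), the documents are invented, and the final prompt would be sent to an LLM that is not shown.

```python
import math
import re

def tokenize(text):
    return re.findall(r"[a-z]+", text.lower())

def toy_embed(text, vocabulary):
    """Word-count vector; a hypothetical stand-in for a real embedding model."""
    words = tokenize(text)
    return [words.count(term) for term in vocabulary]

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

documents = [
    "Refunds are issued within five business days of approval.",
    "Our office is closed on public holidays.",
    "Shipping to Europe takes three to seven days.",
]
vocabulary = sorted({word for doc in documents for word in tokenize(doc)})
# The "vector database": each document stored next to its embedding.
index = [(doc, toy_embed(doc, vocabulary)) for doc in documents]

def retrieve(question, k=1):
    """Return the k stored documents most similar to the question."""
    q_vec = toy_embed(question, vocabulary)
    ranked = sorted(index, key=lambda item: cosine_similarity(q_vec, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

def build_prompt(question, k=1):
    """Prepend the retrieved context to the question before calling an LLM."""
    context = "\n".join(retrieve(question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

prompt = build_prompt("How long does shipping to Europe take?")
print(prompt)
```

In a real pipeline, the embedding function would be a trained model and the sorted scan would be replaced by a query against the vector database's index.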

Traditional databases have historically not supported such semantic retrieval natively because they lack multi-dimensional indexing optimized for vector math. However, some are now adopting plugin-based vector support (for example, the pgvector extension for PostgreSQL) or hybrid indexing techniques to stay relevant in AI-powered scenarios.

Challenges and Considerations

Despite their promise, vector databases come with challenges:

Complexity: Requires knowledge of embeddings, dimensions, and similarity metrics.

Resource demand: Vector computations are CPU/GPU-intensive, and indexes kept in RAM add significant memory cost.

Data update challenges: Index rebuilding can be time-consuming for large datasets.

Security and compliance: Managing sensitive embeddings demands strong governance measures.
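To give the resource point some scale, here is a back-of-the-envelope estimate of the memory needed for the raw vectors alone. It assumes 4-byte float32 values and ignores index structures and metadata, which add more on top; the collection sizes and the 768-dimension figure are illustrative, not measurements from any specific system.

```python
def raw_vector_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Bytes needed to hold the raw vectors (float32 by default), excluding index overhead."""
    return num_vectors * dimensions * bytes_per_value

# One million 768-dimensional vectors: 3,072,000,000 bytes (about 3.07 GB).
small = raw_vector_bytes(1_000_000, 768)

# One billion 1536-dimensional vectors: 6,144,000,000,000 bytes (about 6.14 TB).
large = raw_vector_bytes(1_000_000_000, 1536)

print(f"{small / 1e9:.2f} GB, {large / 1e12:.2f} TB")
```

Numbers like these are one reason large deployments often combine compression, disk-backed storage, or sharding with in-memory indexes.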

On the other hand, traditional databases remain robust and proven for compliance-heavy, deterministic workloads, offering decades of optimization and reliability.

The Road Ahead

As data evolves from structured records to rich, unstructured contexts, vector databases mark a paradigm shift in how we perceive search and storage. They don’t replace traditional databases; they complement them.

Organizations are increasingly adopting dual-storage strategies — using traditional databases for transactional reliability and vector databases for intelligent, context-aware operations. This hybrid data stack forms the foundation of the modern AI era, where meaning matters more than mere matching.
